Ditching Stock

When stock photography was eroding audience trust, we built a smarter solution from scratch.

The Challenge

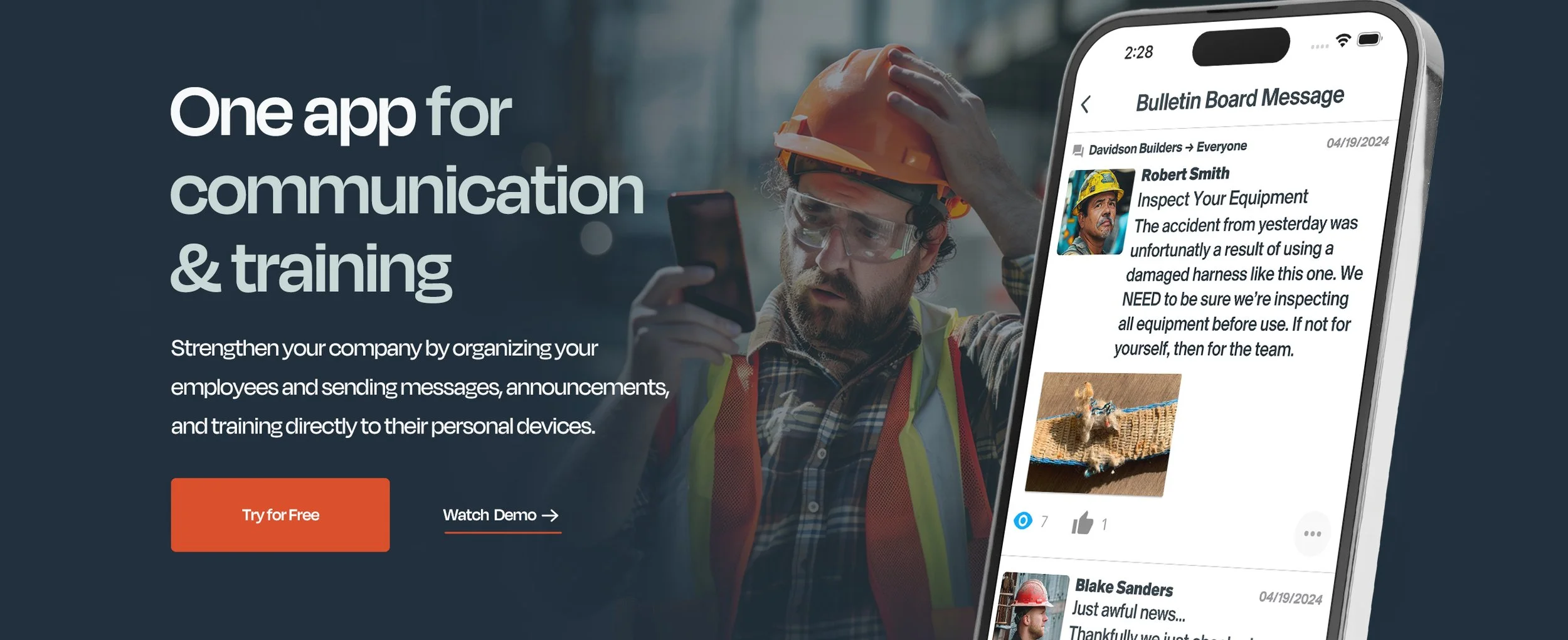

MindForge served the construction industry: foremen, crew leads, and project managers. People who work with their hands, wear hard hats, and spend their days on job sites. People who look nothing like a Getty Images search result.

Our marketing content told a different story. Spotless safety vests. Perfect lighting. Models who had clearly never been within fifty feet of a concrete mixer. The kind of stock photography that says "we understand your world" while proving the opposite.

The problem wasn't aesthetic. It was trust. Our audience was sophisticated enough to recognize performative imagery when they saw it. And when they did, it created a subtle but real distance between the brand and the people we were trying to reach. In an industry built on credibility and reliability, that distance mattered.

A dedicated photography budget and access to active job sites would have solved the problem cleanly. We had neither.

My Role

This was entirely my initiative. I identified the problem, researched the solution, pitched the approach to leadership, and executed it, producing a library of AI-crafted images that became the visual backbone of our blog content, website, and paid advertising.

No outside vendors. No dedicated budget. Just a recognition that the tools existed to solve a real problem, the conviction to make the case for using them, and twenty years of Photoshop experience to get the results over the finish line.

The Approach

The timing was right. AI image generation had matured to a point where, with careful prompting and curation, it was possible to produce imagery that felt grounded and authentic rather than synthetic and strange.

But anyone who has worked seriously with AI generation knows the gap between "close enough" and "actually usable." The technology is both remarkable and frustrating. Faces drift, proportions betray themselves, and anything involving hands, machinery, or complex interactions tends to fall apart under scrutiny. Generating a thousand images and picking ten good ones produces mediocre results. What the work actually required was the same thinking that goes into any good art direction: understanding the audience, knowing what authentic looks like in their world, and making deliberate decisions about what to show and how to show it.

My advantage was twenty years of Photoshop experience. Rather than generating endlessly in search of a perfect output, I learned to identify images that were close enough to fix and then fix them. A construction worker shaking hands with a police officer sounds simple until the AI gives both men hard hats or outfits the foreman with a utility belt. Those aren't generation problems; they're editing problems. I'd composite multiple generations together, swap backgrounds, correct anatomy, and extend scenes until the final image looked like something a photographer might have actually captured on a job site.

Photoshop's own AI tools became part of the workflow too: spot corrections, background replacements, adding or removing elements with enough precision to change the meaning of an image entirely.

The result was a hybrid process: AI for the raw material, human craft for the finish.

The PPE Problem

One of the most important (and least obvious) challenges was safety compliance.

Construction sites have strict personal protective equipment (PPE) requirements: hard hats, safety glasses, and high-visibility vests. Related site rules matter too, such as protective caps on exposed rebar. These details are easy to overlook if you don't know to look for them, and both stock photography and AI generation overlook them constantly.

For our audience, those details weren't cosmetic. A foreman looking at an image of a worker without proper eye protection doesn't think "nice photo." He thinks "that's wrong." It breaks the authenticity we were working so hard to build. And in an industry where safety culture is taken seriously, it could undermine the brand's credibility entirely.

Every image I produced went through a safety audit before it was approved. Missing PPE got added. Exposed rebar got capped. Small details that nobody outside the industry would notice, but everyone inside would.

Where AI Reached Its Limits

We learned quickly that AI handles people far better than it handles machinery. Workers, foremen, crews in conversation: those could be coaxed into something believable with enough prompting and editing. Construction equipment was a different story entirely. Excavators, cranes, concrete mixers… the AI consistently produced machines that looked structurally wrong in ways that were difficult to articulate but immediately obvious. Proportions were off. Details were invented. Controls were in the wrong places.

For images that needed convincing heavy equipment, we went back to stock photography, but selectively and strategically. We'd find stock images with authentic-looking machinery and then composite our AI-generated workers into them, replacing the squeaky-clean stock models with our grittier, more realistic figures. The best of both worlds: equipment that looked mechanically credible, people who looked like they actually worked for a living.

The workflow wasn't glamorous. But it produced images that served the brand in a way that neither pure AI nor pure stock photography could have done alone.

The Work

The resulting library replaced stock photography across our blog content, website pages, and paid advertising. Every image was scenario-specific rather than generic: a crew gathered around a tablet reviewing plans, a foreman taking photos of a job site hazard, workers watching training content in their car before a shift.

The imagery served the brand voice guidelines we were developing in parallel. Just as we were working to make MindForge sound like a real company talking to real people, these images made it look like one too. Visual and verbal authenticity working together.

The Outcome

The shift produced more authentic visuals that better reflected real-world scenarios. And the difference was visible to the people it was designed to reach. Content that had previously felt generic started feeling relevant. Comments on our organic social posts stopped grilling us on our authenticity and started engaging with the actual content.

The details mattered as much as the aesthetic. Every image passed a safety compliance check: proper PPE, correct equipment, authentic job site conditions. For an audience that lives by those standards every day, getting them right was the difference between imagery that felt familiar and imagery that felt fake.

It also changed how leadership thought about visual content. What started as a workaround for a budget problem became a deliberate creative strategy, one that gave us more control over how we depicted our audience than any stock library ever could.

What I Learned

This project reinforced something I believe about creative problem-solving generally: constraints are often more useful than resources. We didn't have a photography budget. We didn't have access to job sites (for legal reasons). What we had was a problem worth solving and a willingness to find an unconventional path to solving it.

AI image generation gets talked about as a threat to creative work. My experience was the opposite. Used thoughtfully, it's a tool that extends what a small team can do, but only if the person using it understands what good looks like and why it matters. In the end, it wasn't the technology that solved the trust problem. It was the thinking behind it.

Knowing where a tool breaks down is as valuable as knowing what it can do. The hybrid workflow we developed (AI for people, stock for machinery, Photoshop to bridge the gap) came directly from being honest about those limits rather than ignoring them.